Maybe @ericteubert can best explain this himself?

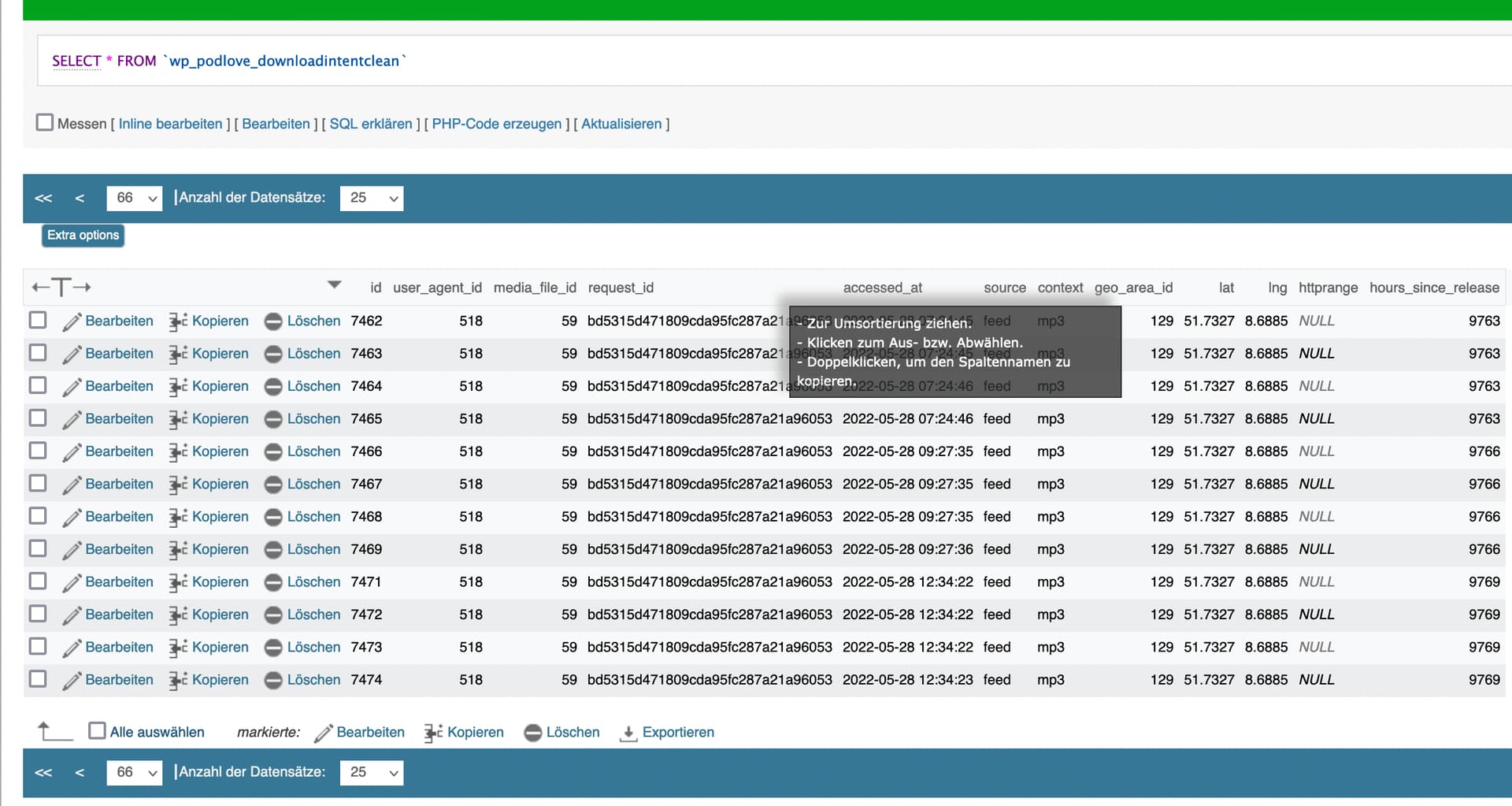

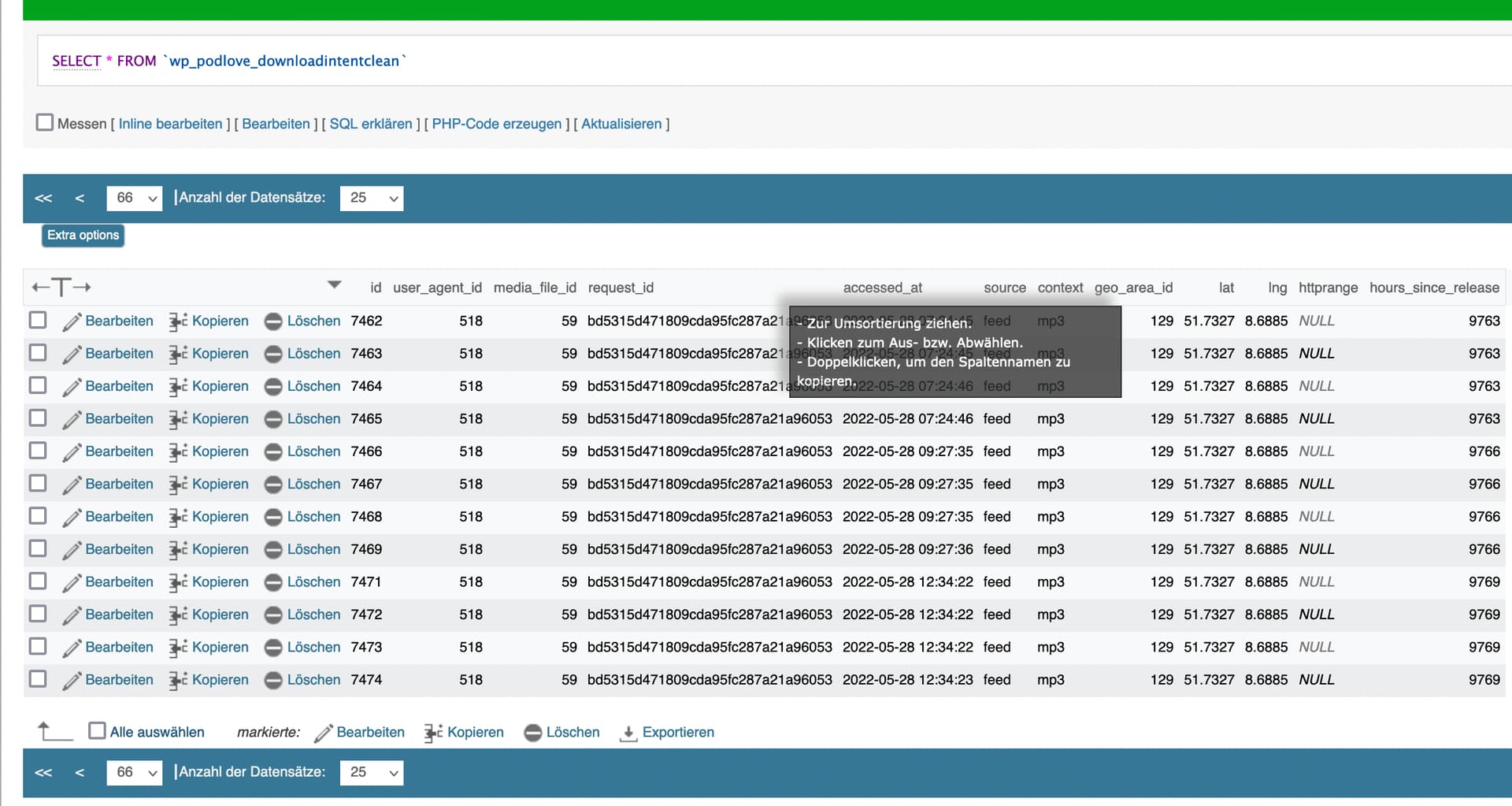

I have a sudden increase in downloads of a certain episode of my podcast and wondered whether this really means a bunch of new listeners. But in the wp_podlove_downloadintentclean table I found that most of the time it is the exact same request_id again and again.

All the intents you see in the picture are counted separately in the statistics (i.e. they add 12 to the download number). But I would think that at least the three to four intents from the exact same time should have been aggregated into one. Also, I thought that one IP/request_id should be blocked for at least 24h for this episode, shouldn’t it?

That does look wrong, yes.

Does it still happen / stay wrong after you let the data recalculate (Werkzeuge > Download-Absichten bereinigen, i.e. Tools > Clean up download intents)?

That depends. Check Experten Einstellungen > Tracking > Deduplikations-Zeitfenster (Expert Settings > Tracking > deduplication window); maybe it is set to hourly for you.

Strange. I’m sure I hit this button last week, and according to the Werkzeuge page the process runs quite regularly, about once an hour. But now, after hitting it again, it seems to have been corrected. The Deduplikations-Zeitfenster was set to daily, but I saved the Tracking settings anyway before cleaning the download intents again. Maybe something was missing in the preferences?

But: the recalculated download numbers are again much lower than they were. Maybe a lot of duplicates went undetected before, or maybe more requests are now identified as bots? I don’t know, but the ranking of my episodes has changed significantly, even though it’s definitely not the first time I have had them recalculated with the Werkzeuge buttons.

Well, that’s it for the moment. Thanks for your effort - next time I will push all the buttons before asking.


@ericteubert OK, one more question:

If I set the deduplication window to 24h and someone downloads multiple episodes on that day, does it count 1 download for that IP on that day, or 1 download per episode for that IP on that day?

Well, it should not happen at all, so thanks for reporting.

Deduplication is per file, so if there are 381 download intents for 20 different episodes during a day from the same client, it counts as 20 downloads.
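To make the per-file rule concrete, here is a minimal sketch in Python. It is not Podlove's actual implementation: the function name `count_downloads`, the tuple layout, and the assumption that the window is a fixed calendar hour or day are all illustrative. It only shows the idea that repeated intents from the same client for the same file within one window collapse into a single download.

```python
from datetime import datetime

# Hypothetical bucket formats; the real plugin may define its window differently.
WINDOW_FORMATS = {"daily": "%Y-%m-%d", "hourly": "%Y-%m-%d %H"}


def count_downloads(intents, window="daily"):
    """Count deduplicated downloads per file.

    intents: iterable of (request_id, media_file_id, accessed_at) tuples,
    where accessed_at is a datetime. Names are assumptions for this sketch.
    """
    fmt = WINDOW_FORMATS[window]  # raises KeyError for an unknown window
    seen = set()
    counts = {}
    for request_id, media_file_id, accessed_at in intents:
        # One download per client, per file, per time bucket.
        key = (request_id, media_file_id, accessed_at.strftime(fmt))
        if key in seen:
            continue
        seen.add(key)
        counts[media_file_id] = counts.get(media_file_id, 0) + 1
    return counts


# 381 intents from one client, spread over 20 files on the same day.
intents = [
    ("client-a", i % 20, datetime(2022, 6, 1, 8, i % 60))
    for i in range(381)
]
total = sum(count_downloads(intents, "daily").values())  # 20
```

With an hourly window, the same client fetching the same file at 08:00 and again at 09:00 would count twice in this sketch, while a daily window would count it once.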


I can resume my report:

Today I checked the stats again, and once more, within a very short time period, the downloads of that episode increased by 18 (which is a lot for my small podcast).

Because I learned my lesson, I hit the buttons in Werkzeuge again, et voilà: those attempts got aggregated into one download.

Checking the Hintergrund-Jobs table, I discovered that the (partial) download intent cleaning job is sometimes reported with a time-related string but sometimes with Never.

I then checked the WP cron diagnostics:

PHP Constants

ALTERNATE_WP_CRON: not defined

DISABLE_WP_CRON: not defined

Is https://plapperbu.de/wp-cron.php accessible? Yes, good!

Are scheduled crons run? Yes, good!

So it seems the problem is that the download intent cleaning cron job doesn’t always run as it should, which leads to wrong statistics.

If I can do something else to help clear this up, just let me know.

Update: The same happened today with another episode, which was shown with 21 downloads. The cleaning job was reported as “Never” finished. After hitting the buttons, the stats were corrected to a single download. So it’s definitely the download intent cleaning job not running. I updated my WP instance to 6.0 recently … maybe something has been broken with jobs since then.

Until now I did not have a system cron job; WP cron was only triggered when the site was visited. Maybe that’s the problem, as I don’t have many visitors. Anyway, I’ll give it a try with a proper cron job that fires once an hour. We’ll see if that fixes it.

No, using an hourly cron job to trigger wp-cron.php doesn’t change anything. Every morning when I check, the partial cleaning job is shown as finished “never” and as having lasted 0 to 0.1 sec. The download intents are not recalculated until I trigger it manually.
